While most iOS developers around the globe are busy learning Apple’s new programming language Swift or playing with early versions of iOS 8 and Yosemite, I’m deeply involved in something much less cutting edge. In fact it’s from over 30 years ago, and it’s courtesy of Microsoft:

I’m having fun getting back into BASIC 2.0 as featured on the legendary Commodore 64 (or C64 or CBM 64).

This was my first computer, and I’ll never forget it. German computer magazine “64er” dubbed it the VC-64, or “Volks Computer” (because Commodore’s previous machine was called the VC-20 or VIC-20). It was huge everywhere, but particularly in Germany it was just THE machine to have.

Sure, there was the Amstrad CPC664 and 464 (which were re-branded as Schneider) or the ZX-81 and Spectrum, but they were somewhere in that 5% category of “other home computers”. We never had the BBC Micro, for obvious reasons, and none of my friends could afford an Apple II.

I no longer own the hardware, but some of that early day knowledge is still in me, together with many burning questions that have never been answered. There’s so much I always wanted to know about the C64, and so much I wanted to do with it: write programmes, learn machine language, and generally use it for development. I had no idea that there was such a thing as a Programmer’s Reference or developer tools. Time to get back into it!

Today we have wonderful emulators such as VICE (the Versatile Commodore Emulator) and it’s just like sitting down with my old computer again, on modern day hardware. I’m even doing it on a plastic Windows laptop for a touch of antiqueness (if I don’t get too annoyed with that).

Don’t ask me why this piece of computer history has become such an obsession with me over the last couple of weeks. I feel that for some reason it fits in with all this high-end cutting edge development I’m doing and reminds me how all this super technology started: with cheap plastic that was to change all our lives forever.

I remember the questions from members of my family who had not jumped on the computer bandwagon: “So what do you actually DO with a computer?” – and I guess today as much as back then you would answer, “What am I NOT doing with a computer anymore?”

The 8 bit “home computer” revolution started all that, including the stuff we use every day and half-heartedly take for granted – like downloading a PDF on the beach at 100Mbps, while sending videos to loved ones across the globe in half a second.

Before I get too old to remember, let me see if I can piece the story of “Me and The Machine” together (before my brain inevitably turns into that of a retired old gentleman yelling at the neighbour’s dog in a foreign accent).

I have several Amazon accounts: one in the US, one in the UK, and one in Germany. Every now and again I de-register one of my Kindles from one account and register it with another one. Depends on what content I’d like to read and on which account it’s available.

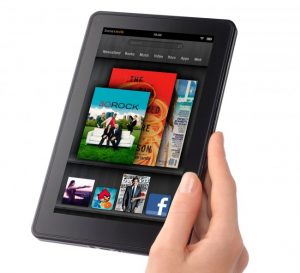

Back in 2011 I bought a first generation Kindle Fire in the US. It hadn’t been released anywhere else, and this device started the whole Kindle Tablet business for Amazon.